2025

An AI-powered design reference system that connects intelligent image tagging with automated client briefings.

Design studios face two recurring pain points:

Amplifier is two connected systems:

The tagger builds the library. The briefing tool makes it useful.

Client (React)

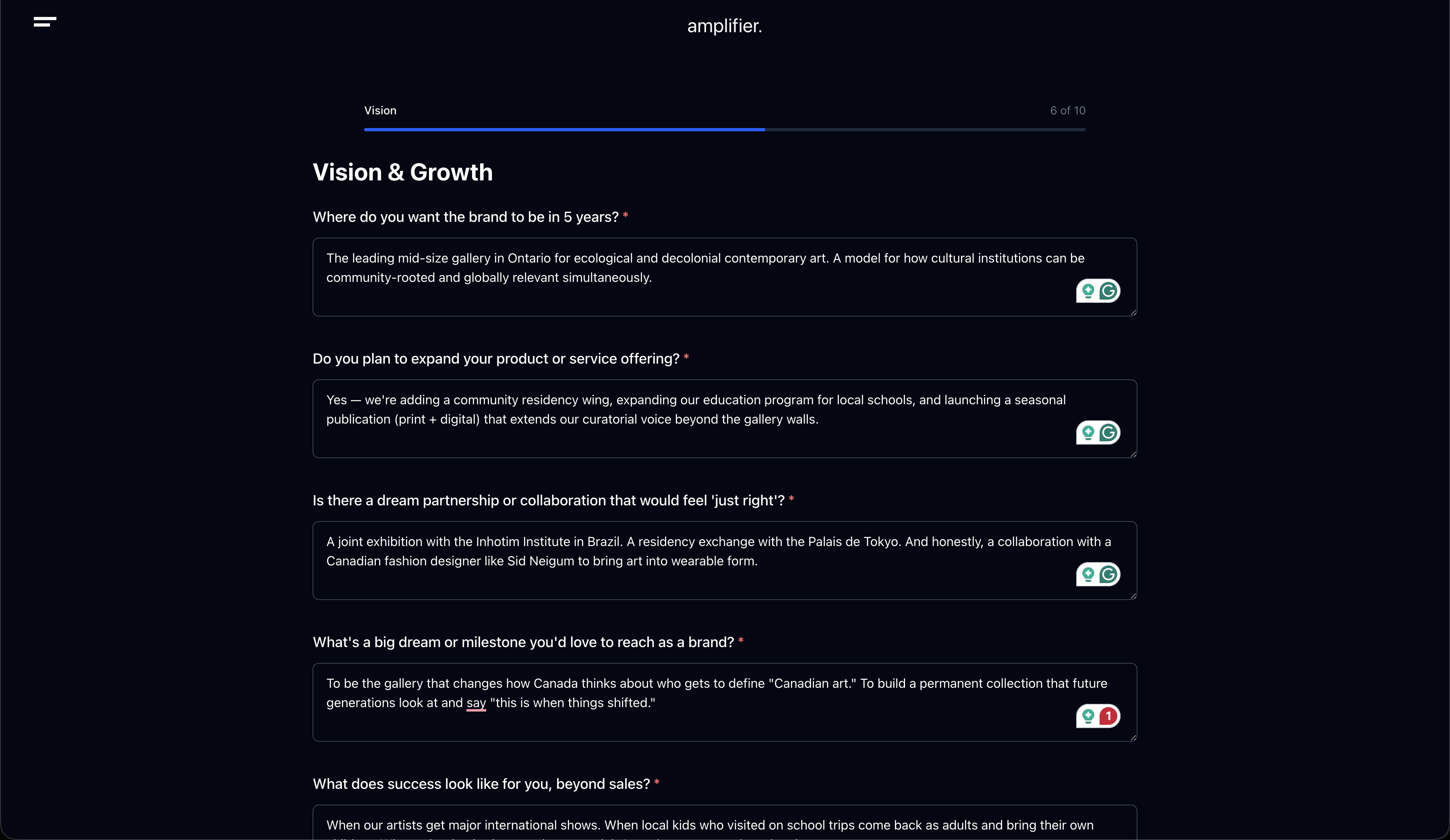

├── Briefing Flow (public)

│ ├── Questionnaire → /api/extract-keywords (Claude Haiku)

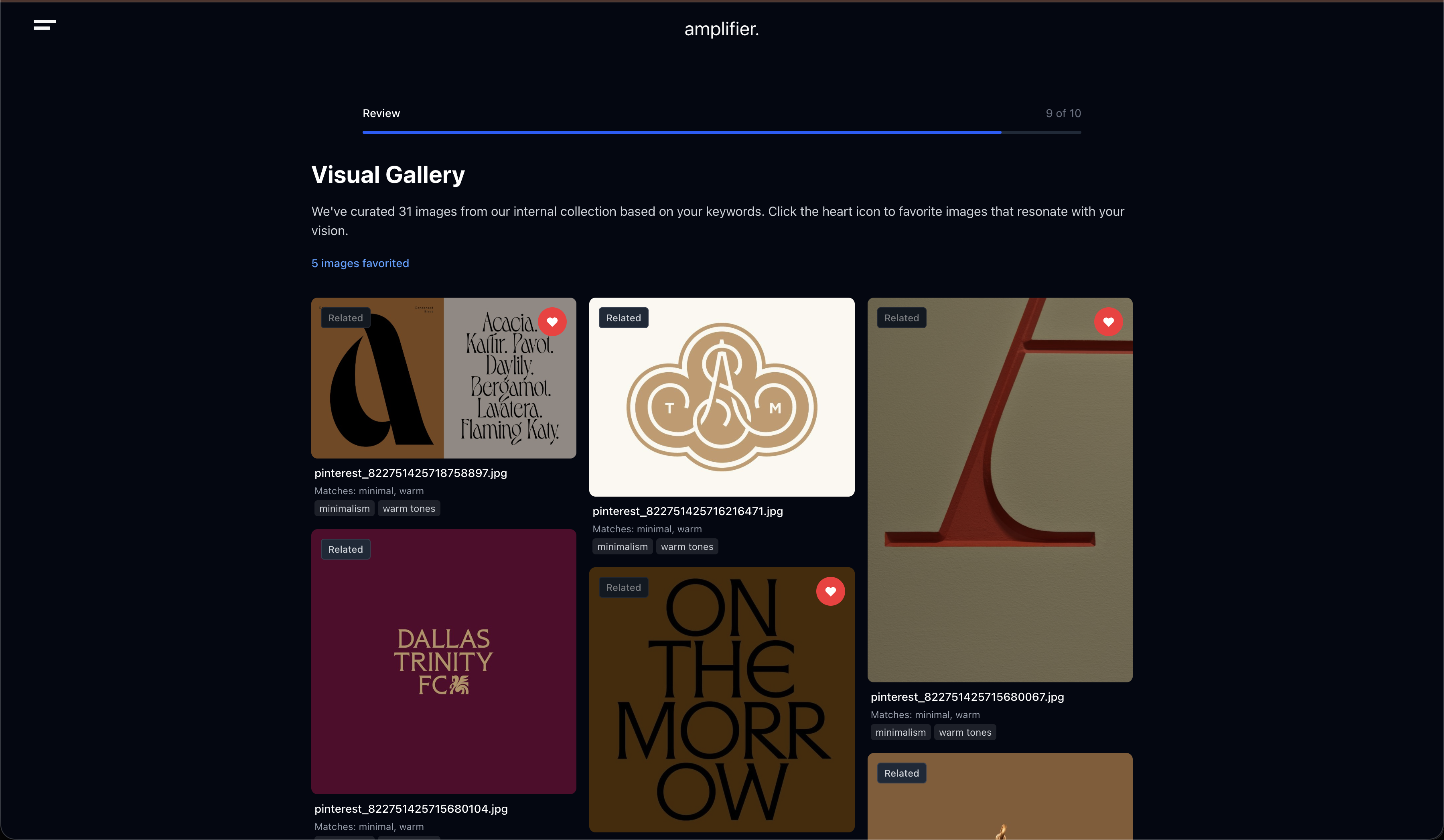

│ ├── Keywords → /api/search-references (weighted DB search)

│ └── Submit → /api/send-briefing (HTML email)

│

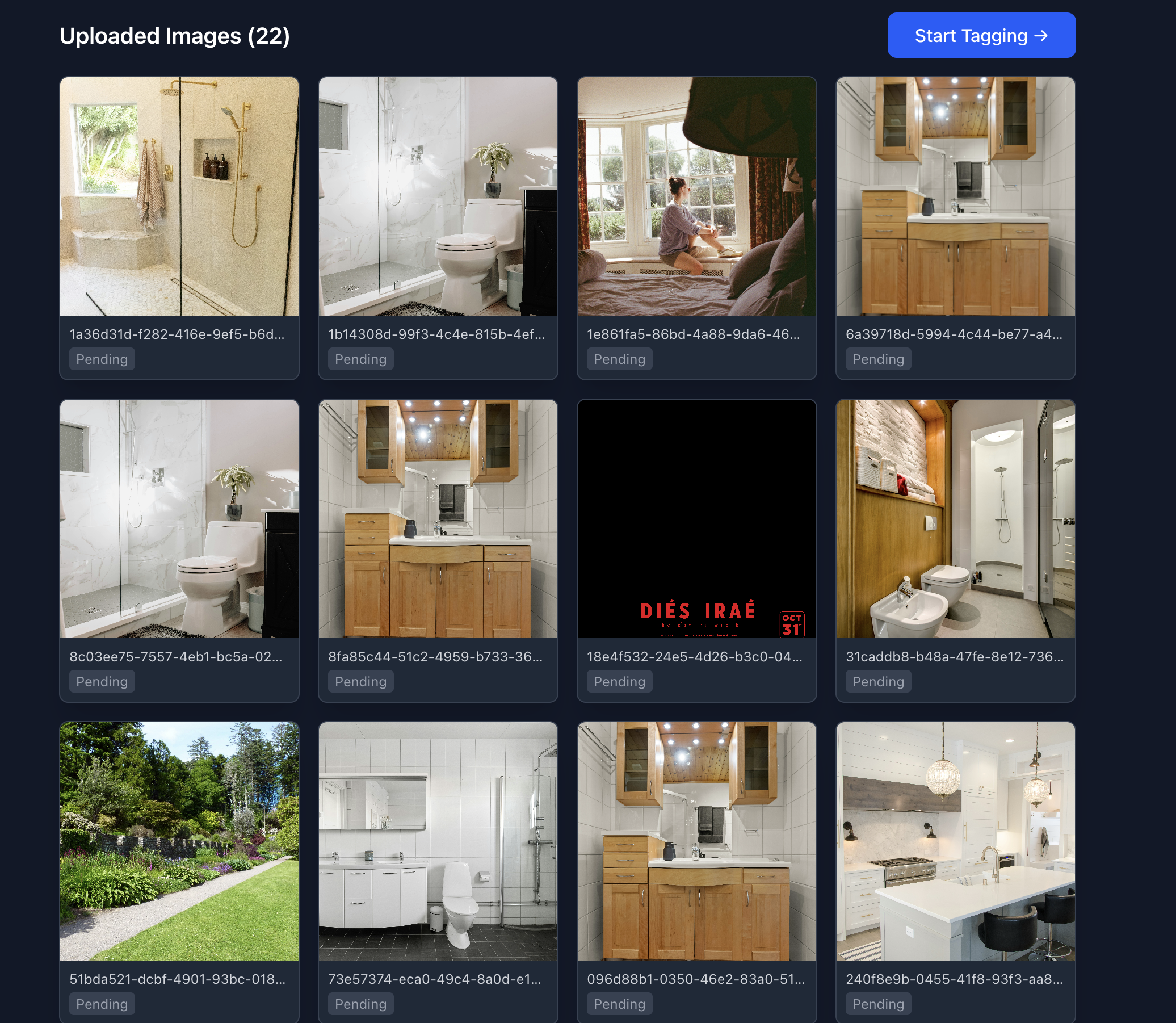

└── Tagger System (auth-protected)

├── Upload → Duplicate Detection (SHA-256 + pHash)

├── Review → /api/suggest-tags (Claude Sonnet Vision + prompt cache)

├── Save → Supabase (images + tags + corrections)

└── Analytics → /api/retrain-prompt (correction analysis)

Supabase

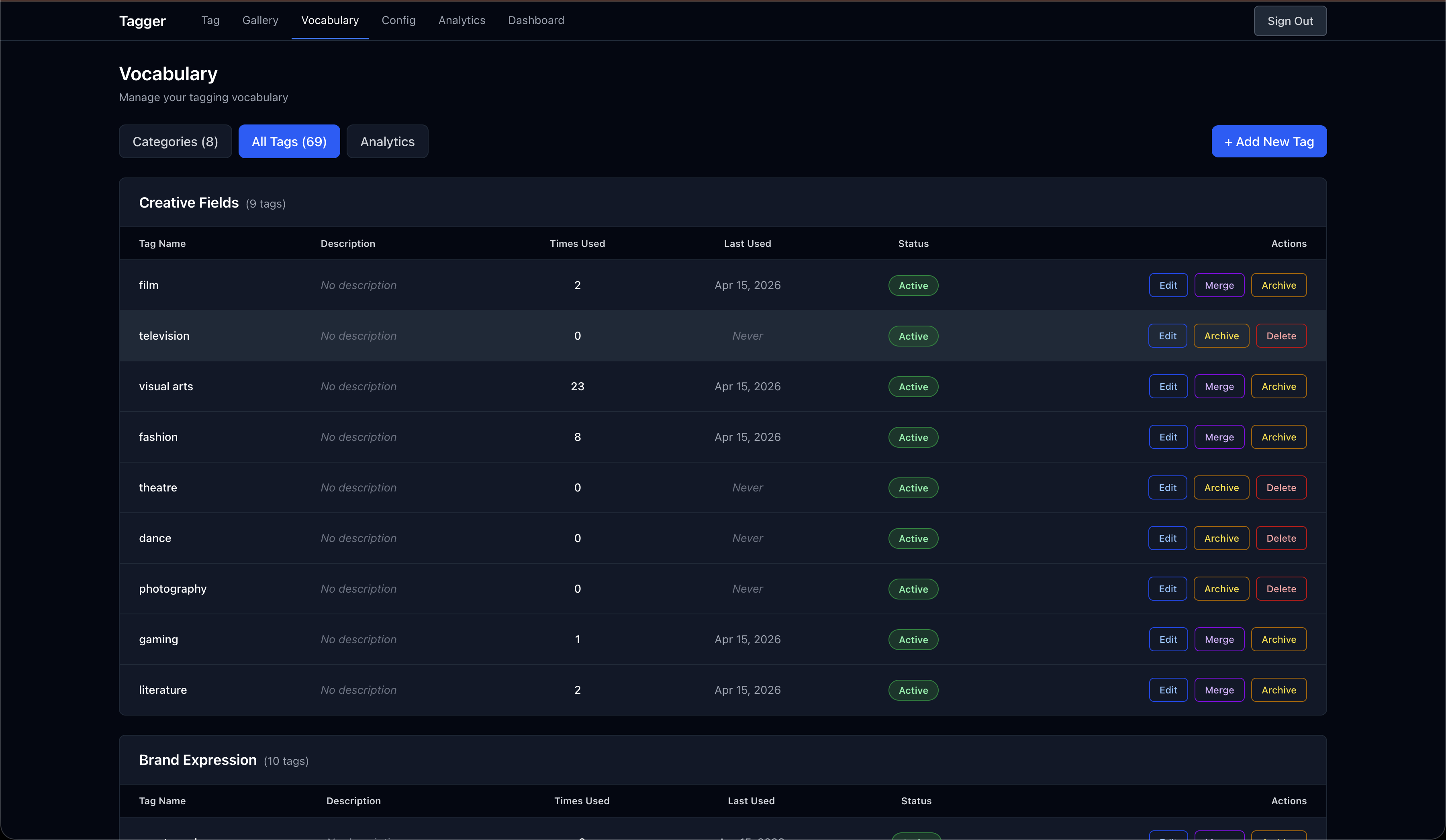

├── PostgreSQL (reference_images, tag_vocabulary, tag_corrections)

├── Auth (email/password, RLS policies)

└── Storage (originals + thumbnails)The vocabulary (which can be large) is placed in the system message with Anthropic's ephemeral cache. The first request in a session pays the full cost, but subsequent image analyses reuse the cached vocabulary — reducing latency by 60-70% and cutting token costs.
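The caching setup described above might look roughly like the sketch below. It only builds the request body for Anthropic's Messages API and sends nothing. The function name `buildTagRequest`, the instruction text, the `max_tokens` value, and the type names are assumptions for illustration, not Amplifier's actual code; the one detail taken from the API itself is the `cache_control: { type: "ephemeral" }` marker on a system block.

```typescript
// Hypothetical types: they mirror the parts of the Messages API body used here.
type TextBlock = {
  type: "text";
  text: string;
  cache_control?: { type: "ephemeral" };
};

type ImageBlock = {
  type: "image";
  source: { type: "base64"; media_type: "image/jpeg" | "image/png"; data: string };
};

interface TagRequestBody {
  model: string;
  max_tokens: number;
  system: TextBlock[];
  messages: { role: "user"; content: Array<ImageBlock | TextBlock> }[];
}

// Builds a request body whose system prompt ends with a cacheable vocabulary block.
// The model id is passed in by the caller; the max_tokens value is an arbitrary assumption.
function buildTagRequest(
  model: string,
  vocabulary: string[],
  imageBase64: string,
  mediaType: "image/jpeg" | "image/png" = "image/jpeg",
): TagRequestBody {
  // Sort a copy so the same vocabulary always serializes to identical text.
  // A cache hit requires the cached prefix to match exactly.
  const vocabularyText = vocabulary.slice().sort().join(", ");

  return {
    model,
    max_tokens: 512,
    system: [
      { type: "text", text: "Tag design reference images using only the allowed tags." },
      {
        type: "text",
        text: `Allowed tags: ${vocabularyText}`,
        // Marks everything up to and including this block as cacheable.
        cache_control: { type: "ephemeral" },
      },
    ],
    messages: [
      {
        role: "user",
        content: [
          // The image changes on every request, so it stays outside the cached prefix.
          { type: "image", source: { type: "base64", media_type: mediaType, data: imageBase64 } },
          { type: "text", text: "Suggest tags for this image." },
        ],
      },
    ],
  };
}
```

Keeping the per-image content in `messages` and the stable vocabulary in `system` is what lets consecutive analyses share the cached prefix. The cached entry expires after a short idle period, so the saving applies to images reviewed in quick succession.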

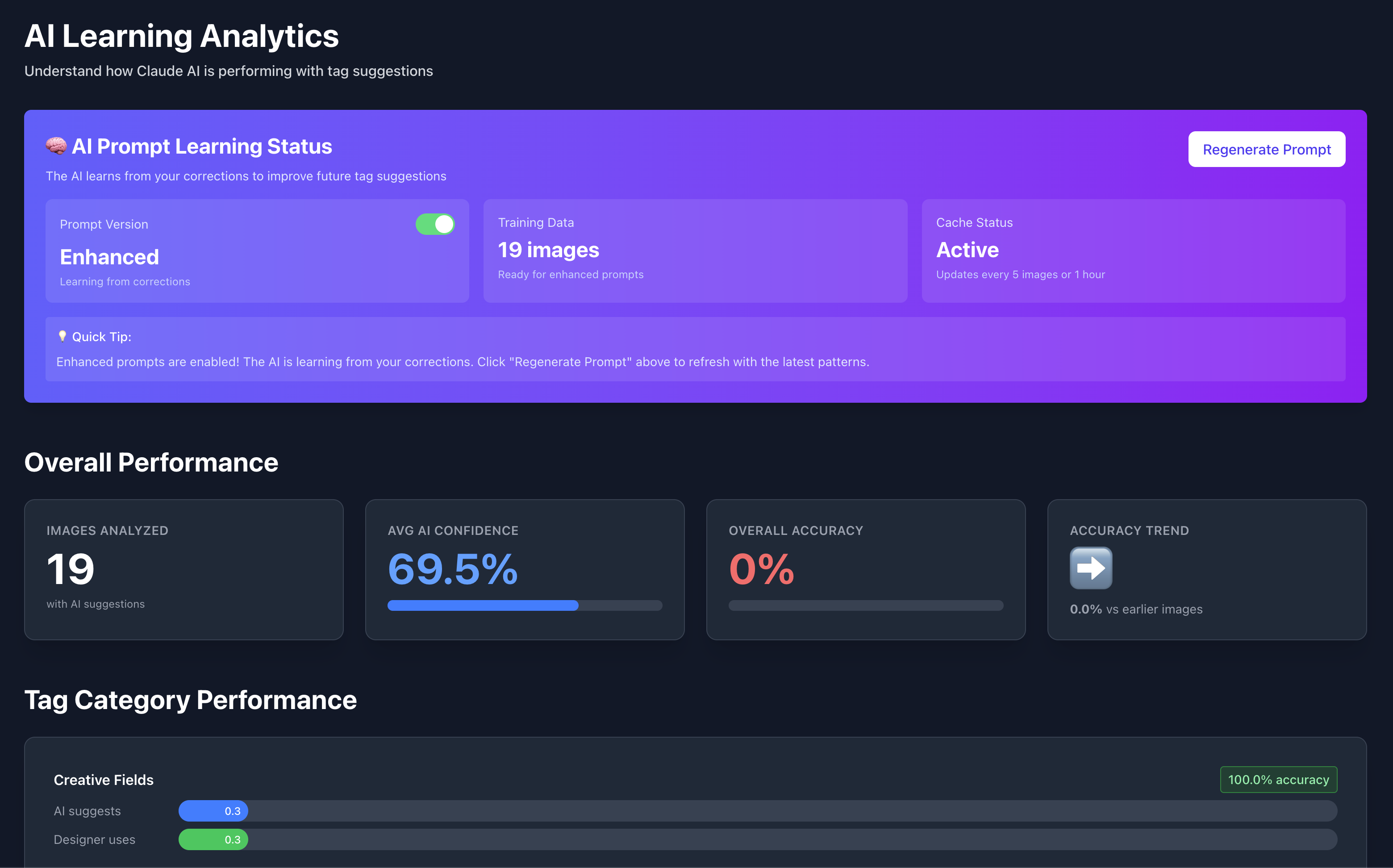

Every time a designer overrides the AI's suggestions, the delta is recorded. The analytics engine aggregates corrections to identify patterns — "you frequently miss the 'tech' tag" or "you over-suggest 'minimal' in 40% of images." These insights are injected into the system prompt for future requests, creating a feedback loop without fine-tuning.
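As a rough illustration of that feedback loop, the sketch below aggregates correction records into plain-language hints and appends them to a system prompt. The record shape (`suggested` versus `final` tags), the function names, the `minCount` threshold, and the hint wording are all assumptions; the actual `tag_corrections` schema and analytics logic are not shown in this write-up.

```typescript
// Hypothetical correction record: what the model proposed vs. what the designer saved.
// Each list is assumed to contain no duplicate tags.
interface TagCorrection {
  suggested: string[];
  final: string[];
}

interface TagStats {
  missed: number;    // designer added a tag the model did not suggest
  removed: number;   // designer removed a tag the model suggested
  suggested: number; // how often the model suggested the tag at all
}

// Turns raw corrections into short hints, ignoring patterns seen fewer than minCount times.
function summarizeCorrections(corrections: TagCorrection[], minCount = 2): string[] {
  // Prototype-free object so tag names such as "constructor" cannot collide with built-ins.
  const stats: Record<string, TagStats> = Object.create(null);
  const bump = (tag: string, key: keyof TagStats): void => {
    if (!stats[tag]) stats[tag] = { missed: 0, removed: 0, suggested: 0 };
    stats[tag][key] += 1;
  };

  for (const { suggested, final } of corrections) {
    for (const tag of suggested) {
      bump(tag, "suggested");
      if (final.indexOf(tag) === -1) bump(tag, "removed");
    }
    for (const tag of final) {
      if (suggested.indexOf(tag) === -1) bump(tag, "missed");
    }
  }

  const hints: string[] = [];
  for (const tag of Object.keys(stats).sort()) {
    const s = stats[tag];
    if (s.missed >= minCount) {
      hints.push(`You frequently miss the '${tag}' tag (${s.missed} corrections).`);
    }
    if (s.removed >= minCount) {
      const pct = Math.round((s.removed / s.suggested) * 100);
      hints.push(`You over-suggest '${tag}': removed in ${pct}% of the images where it was suggested.`);
    }
  }
  return hints;
}

// Appends the hints to a base system prompt; returns the prompt unchanged when there are none.
function withCorrectionHints(basePrompt: string, hints: string[]): string {
  if (hints.length === 0) return basePrompt;
  return `${basePrompt}\n\nLessons from past corrections:\n- ${hints.join("\n- ")}`;
}
```

Because the hints are ordinary prompt text, they can be regenerated whenever new corrections arrive, which is what makes the loop work without fine-tuning.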

The tagger UI orchestrates 9 custom hooks (useAISuggestions, useImageUpload, useDuplicateDetection, etc.) that each own a single concern. When the entire UI was redesigned from checkbox-based to review-centric, only the view layer changed — every hook stayed untouched.